Introduction to Adversarial Examples

As the deep learning revolution was gaining momentum, (Szegedy et al., 2014) discovered an "intriguing" property of deep learning models trained for classification. They show that you can take an input image that a model classifies correctly and optimize it such that a model will misclassify the image while keeping the "adversarial image" indistinguishable from the original.

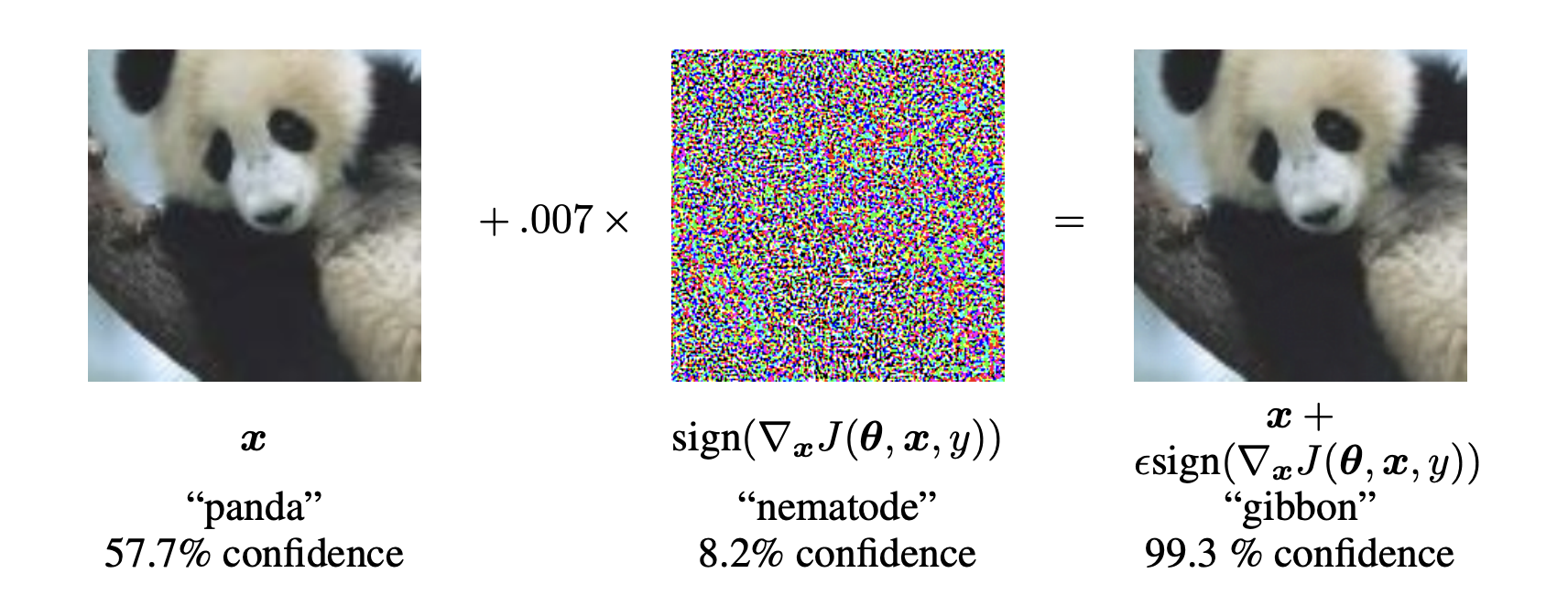

Fig. 1

A famous adversarial image from (Goodfellow et al., 2015).

While the image on the right appears identical to the one on the left, it is misclassified by the model.

There are several popular methods for optimizing adversarial images, several of which you will learn in this course. Historically, there has been a kind of cat and mouse game, where as researchers propose new defenses against adversarial attacks, others propose stronger attacks to break those defenses. While methods such as adversarial training (Goodfellow et al., 2015), defensive distillation (Papernot et al., 2016), and countless other techniques aim to solve this issue, guaranteeing adversarial robustness for computer vision is still an open research problem.

Adversarial attacks could be a security concern when computer vision models are deployed in high stakes settings. Imagine a stop sign is physically altered, such that a self-driving car classifies it as a cake. This could cause a car to continue moving when others expect it to stop, potentially causing a crash.

Fig. 2

Source: (Pavlitska et al., 2023)

Although these vulnerabilities can certainly cause safety concerns in some settings, our main motivation for including this section in the course is to introduce AI security concepts in a digestible way. When you perform analogous attacks and defenses against language models later in the course, you will find that many of the concepts you learn about in this section will map onto more complicated scenarios.

Section Table of Contents

-

Models and Data - Overview of the CIFAR-10 and MNIST datasets, along with the models used throughout this section

-

White Box Attacks - Attacks that assume full knowledge of the target model

- FGSM and PGD - Fast Gradient Sign Method, Basic Iterative Method, and Projected Gradient Descent

- Carlini & Wagner - A sophisticated optimization-based attack method

-

Black Box Attacks - Attacks that work without knowledge of model internals

- Square Attack - A query-efficient black-box attack method

- Ensemble Attacks - Using multiple surrogate models to improve transferability

-

Defenses - Methods to protect models against adversarial attacks

- Defensive Distillation - Using temperature scaling to smooth model gradients

- Adversarial Training - Training models on adversarial examples to improve robustness

-

Benchmarks & State of the Art - How to properly evaluate adversarial robustness

- RobustBench - The standard benchmark for evaluating adversarial defenses

- State of the Art - How scaling compute and data set a new record for adversarial robustness